Recent works have shown that generative models leave

traces of their underlying generative process on the generated samples, broadly referred to as fingerprints

of a

generative model, and have studied their utility in detecting

synthetic images from real ones. However, the extend to

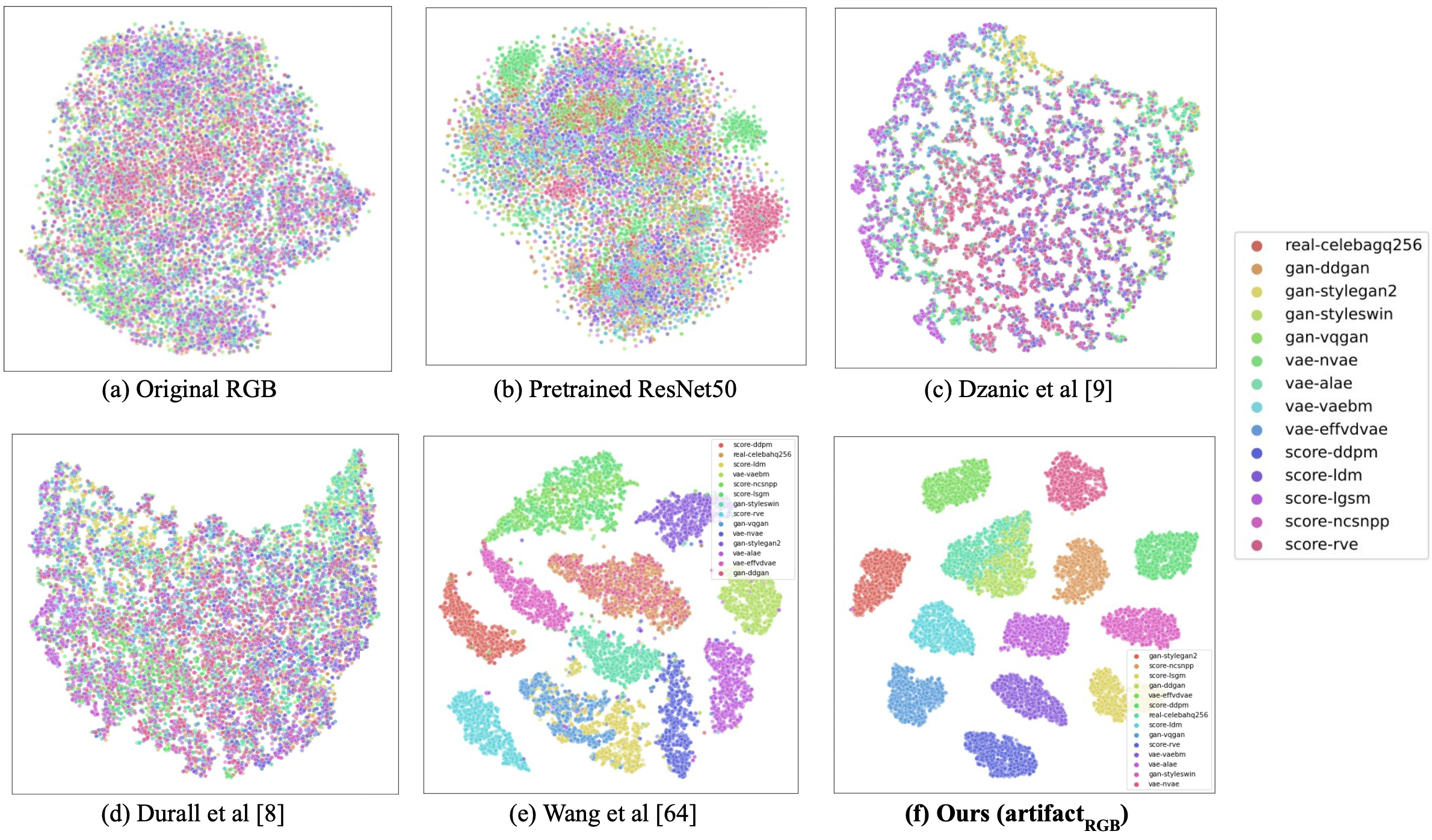

which these fingerprints can distinguish between various

types of synthetic image and help identify the underlying

generative process remain under-explored. In particular,

the very definition of a fingerprint remains unclear, to our

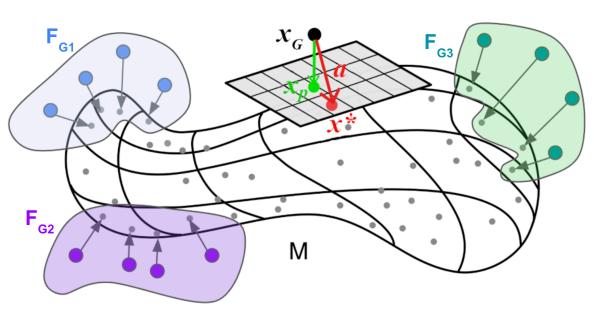

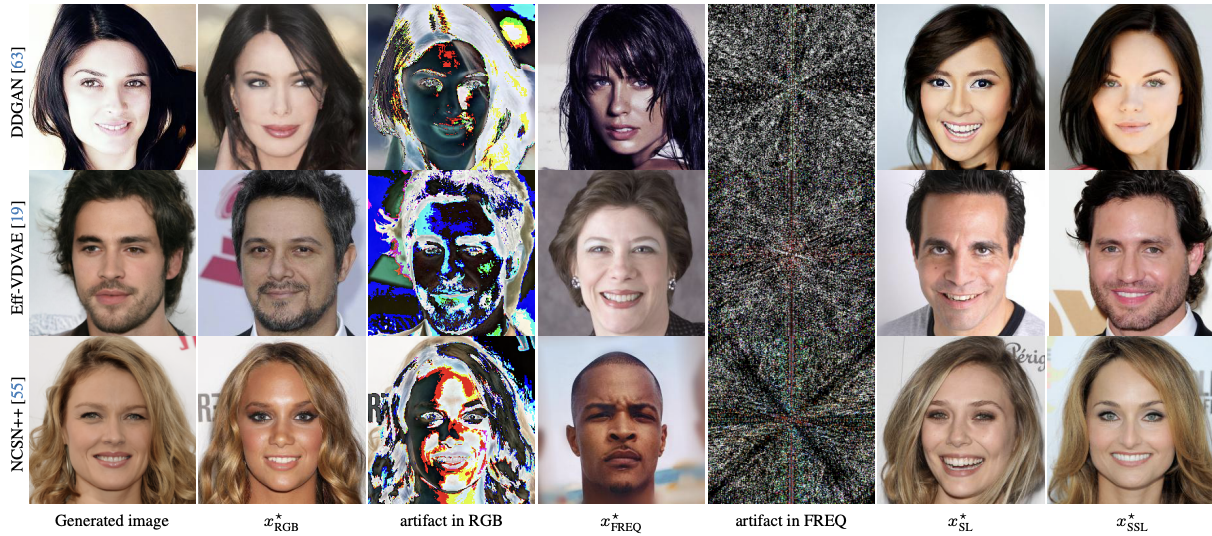

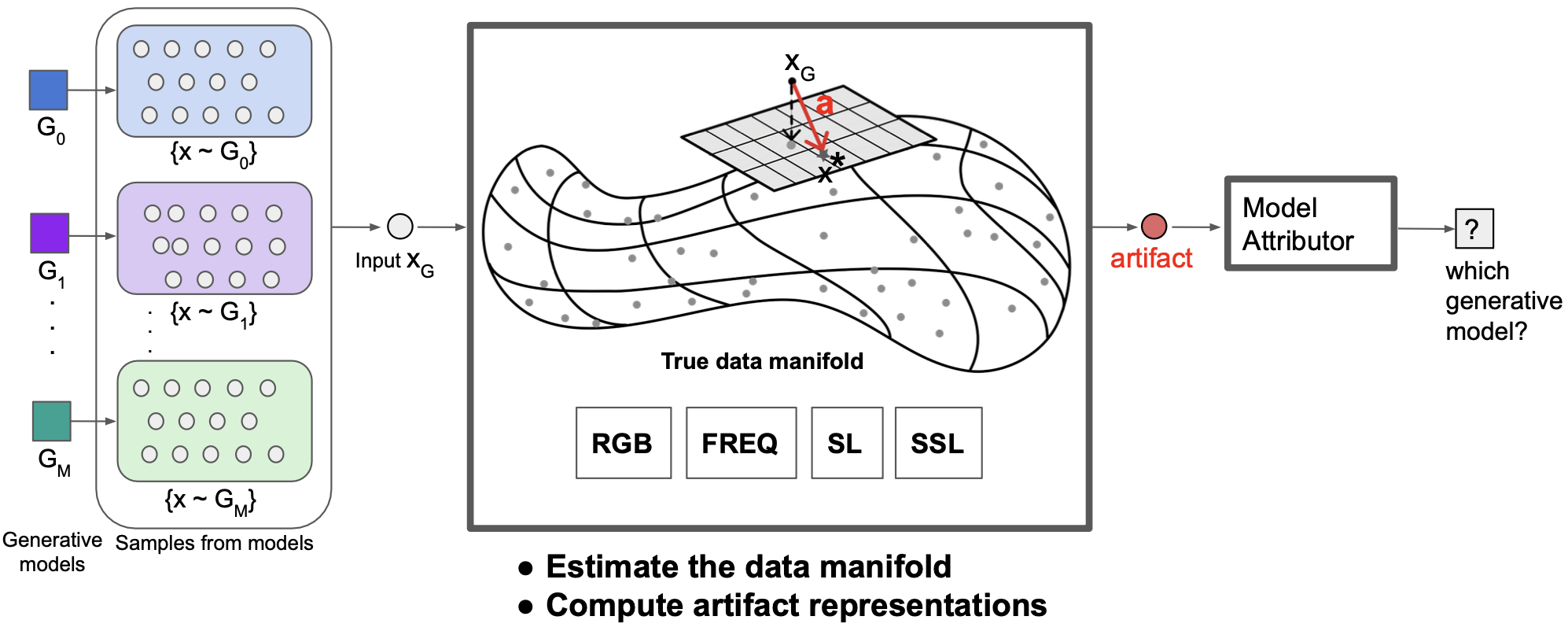

knowledge. To that end, in this work, we formalize the definition of artifact and fingerprint in generative

models,

propose

an algorithm for computing them in practice, and finally

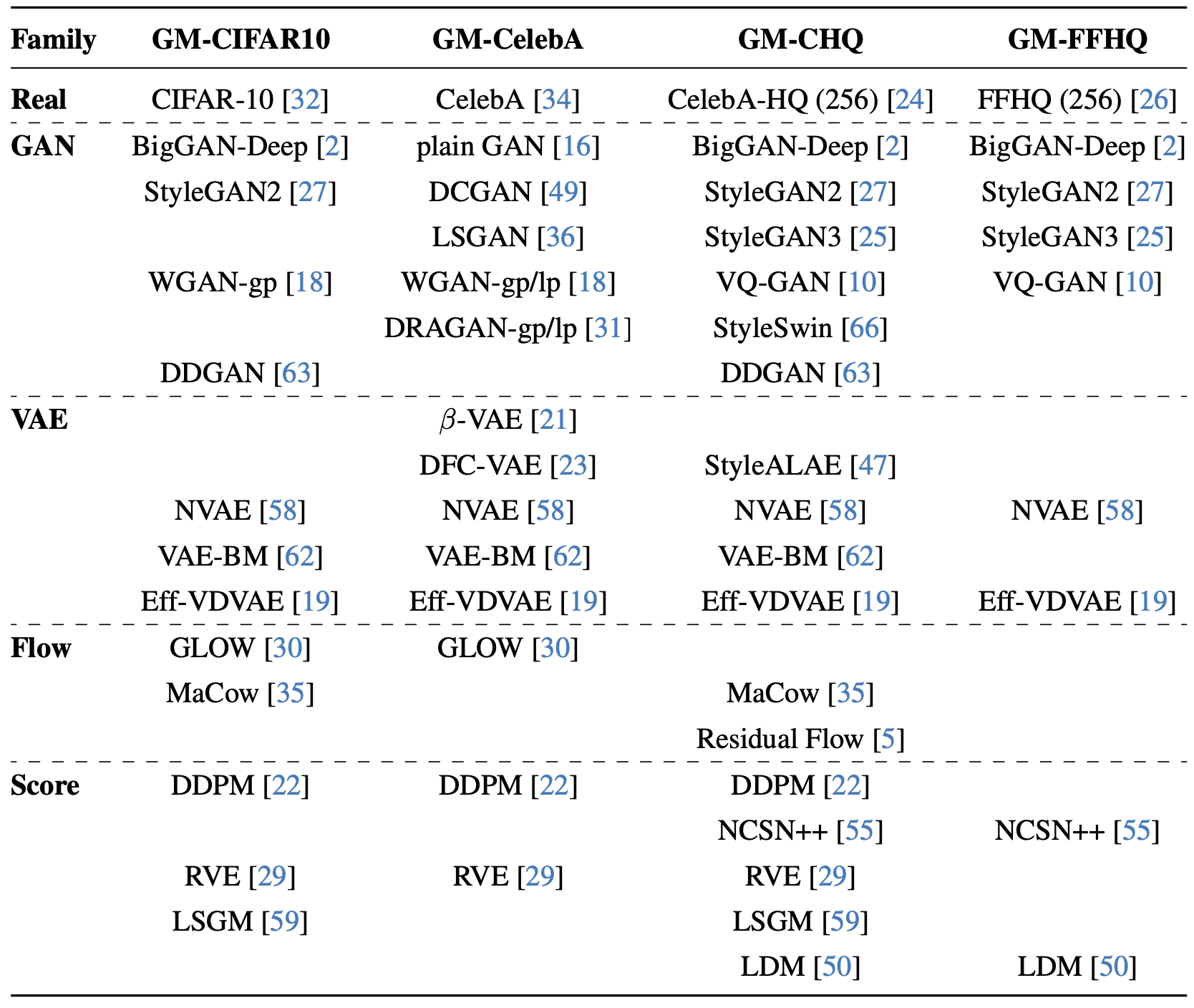

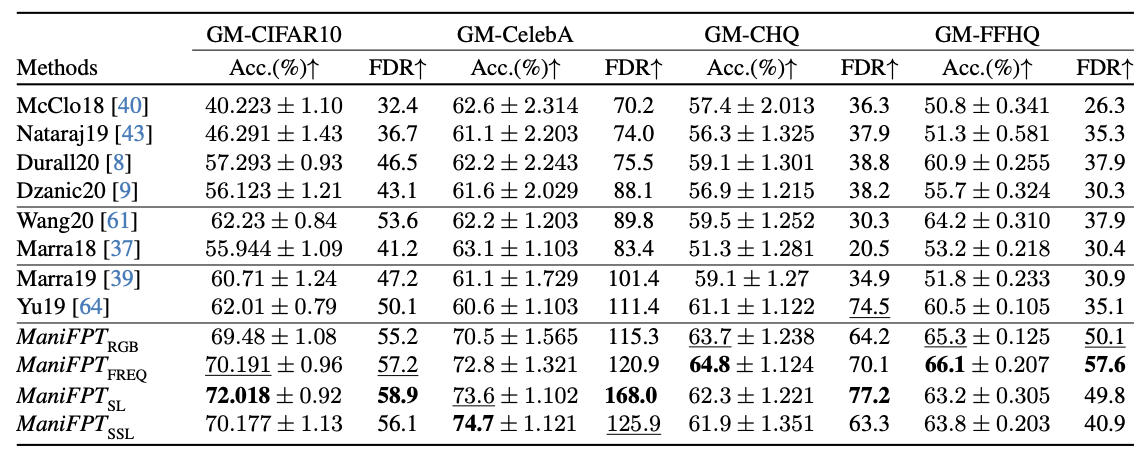

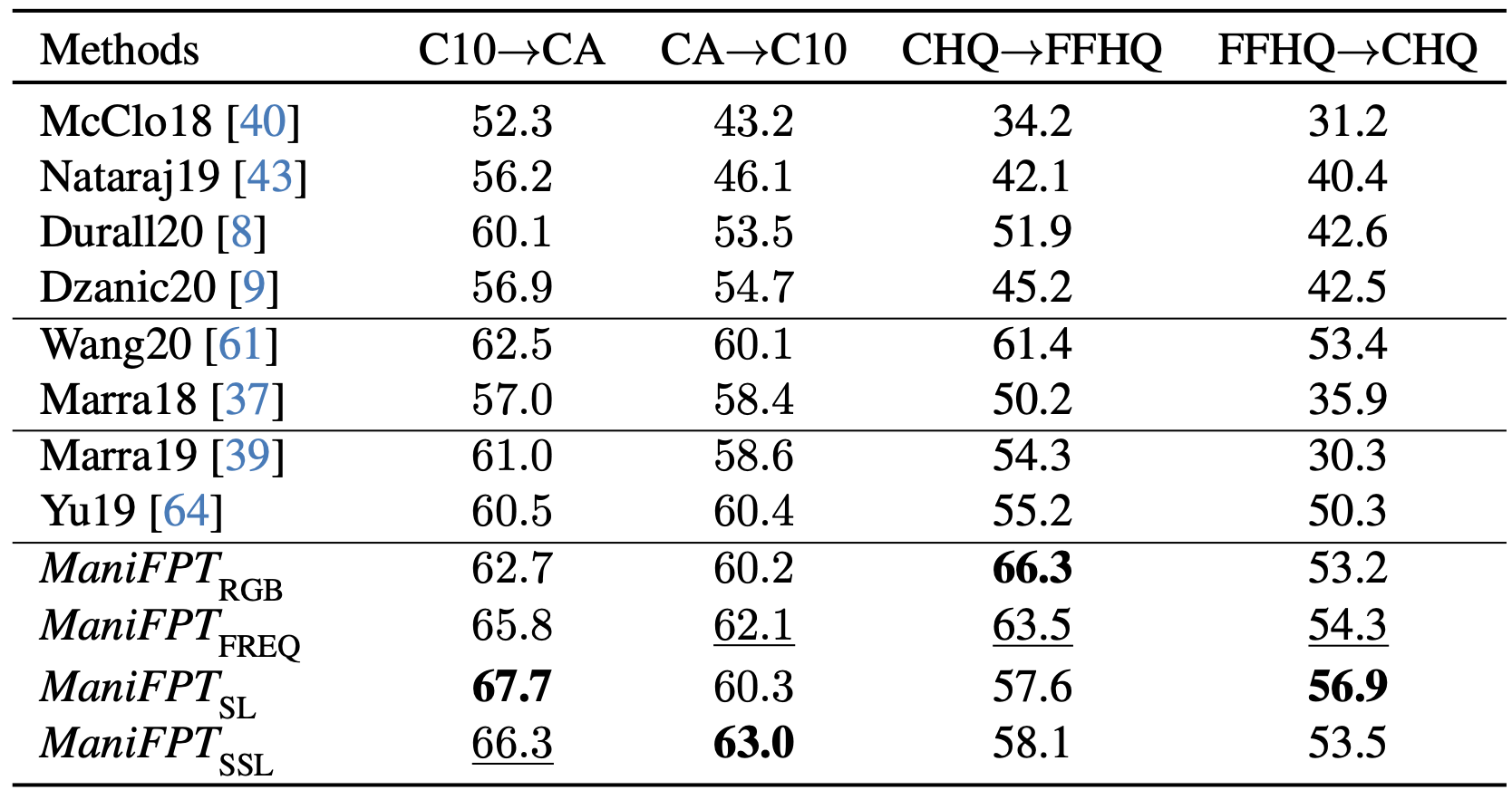

study its effectiveness in distinguishing a large array of different generative models. We find that using

our proposed

definition can significantly improve the performance on the

task of identifying the underlying generative process from

samples (model attribution) compared to existing methods.

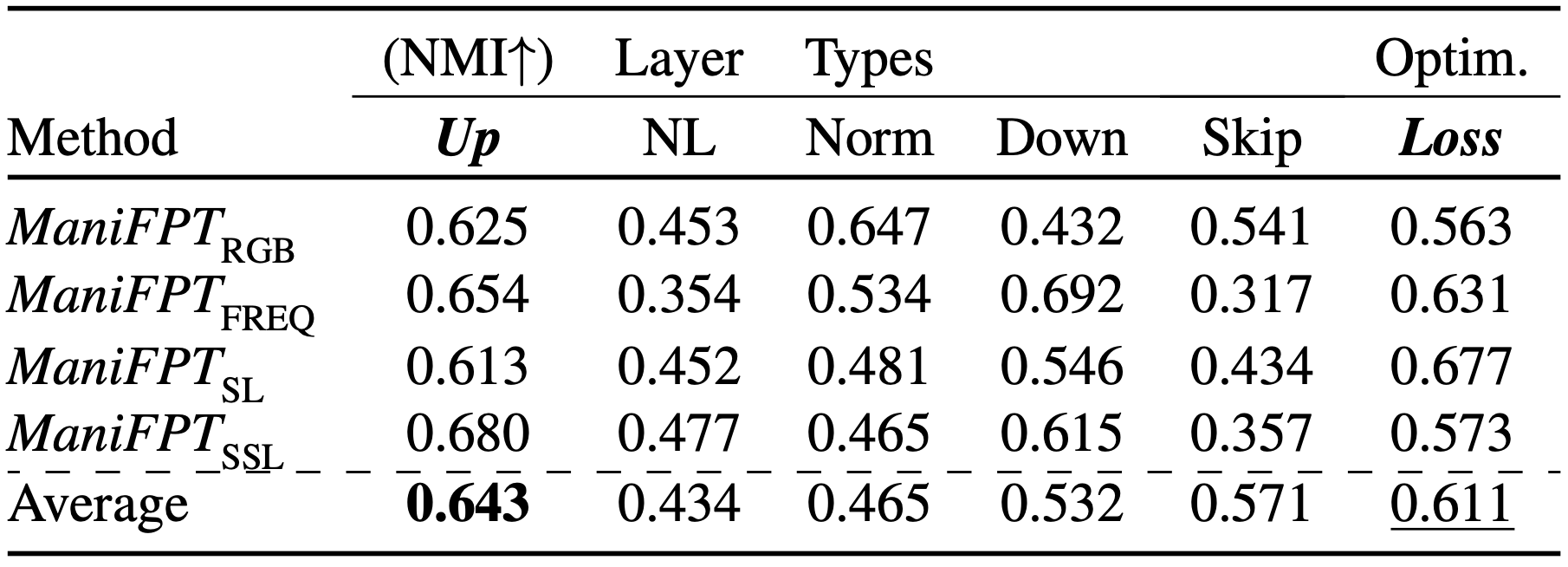

Additionally, we study the structure of the fingerprints, and

observe that it is very predictive of the effect of different

design choices on the generative process.